We are going to use this dags folder to place our python workflows. Once your containers are up and running, a dags folder is created on your local machine where you placed the docker-compose.yml file. Once you download the docker-compose file, You can start it using the docker-compose command. The official site provides a docker-compose file with all the components needed to run Airflow. Pretty simple workflow, But there are some useful concepts that I will explain as we go. Print the extracted fields using the bash echo command.Creating an Airflow DAG.Īs an example, let's create a workflow that does the following:

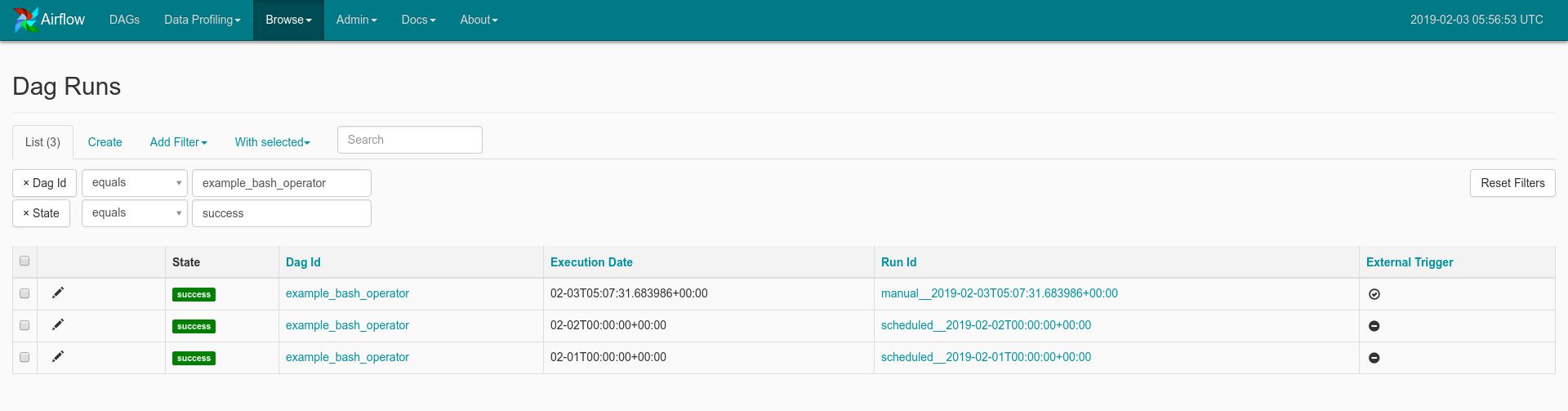

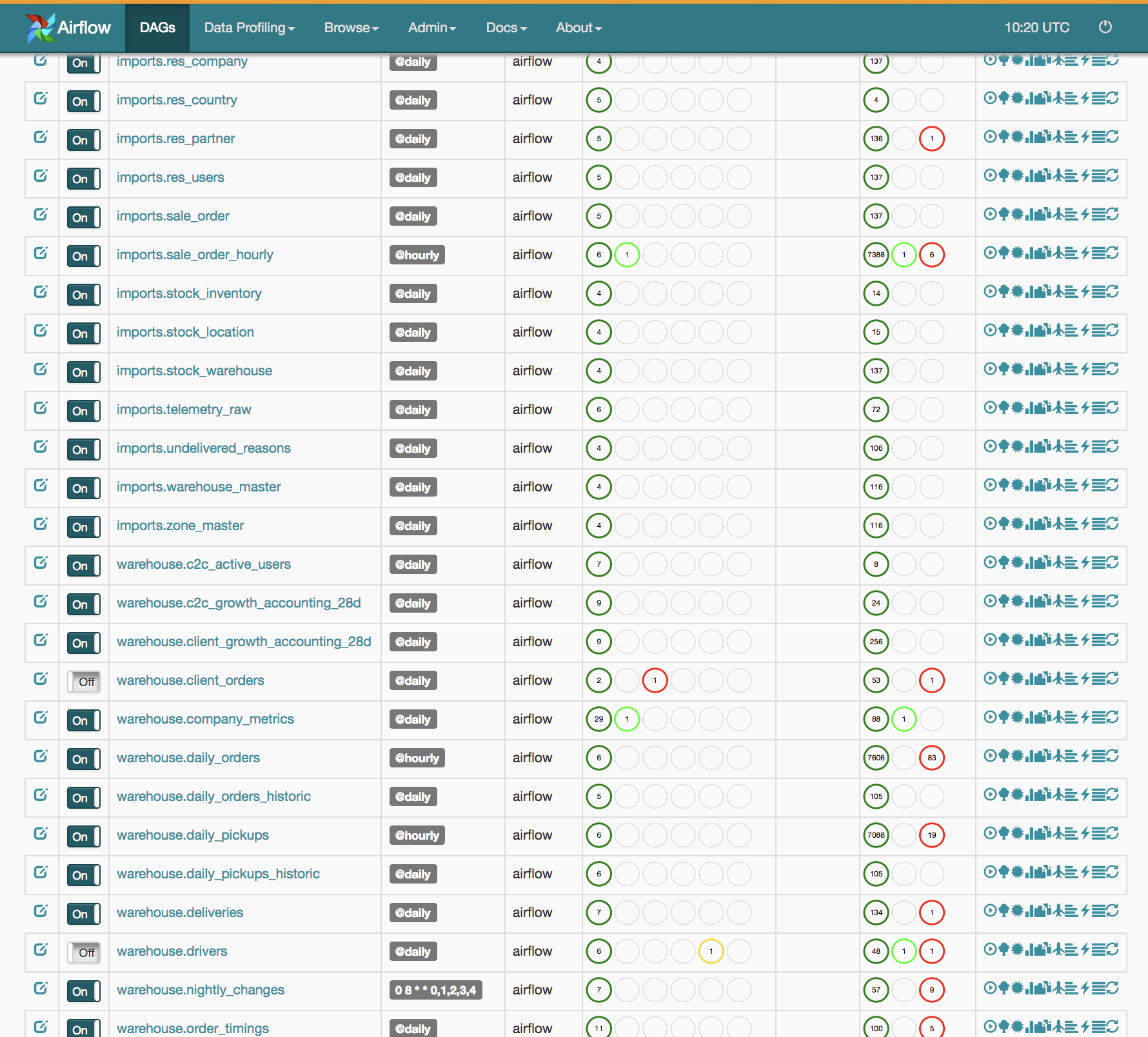

Let’s do something exciting, i.e., create our first Airflow DAG. You can find more operators here.įinally, with the theory done. There are quite a few other operators that will help you make an HTTP call, connect to a Postgres instance, connect to slack, etc. DummyOperator - used to represent a dummy task.BranchOperator - used to create a branch in the workflow.PythonOperator - used to run a python function you define.BashOperator - used to run bash commands.These classes are provided by airflow itself. Now, what is an operator? An operator a python class that does some work for you. Key ConceptsĪ workflow is made up of tasks and each task is an operator. Well, not difficult but pretty straightforward. Once you create Python scripts and place them in the dags folder of Airflow, Airflow will automatically create the workflow for you. The workflow execution is based on the schedule you provide, which is as per Unix cron schedule format. They are usually defined as Directed Acyclic Graphs (DAG). Workflows are created using Python scripts, which define how your tasks are executed. It then handles monitoring its progress and takes care of scheduling future workflows depending on the schedule defined. """ # Tutorial Documentation Documentation that goes along with the Airflow tutorial located () """ # from datetime import timedelta from textwrap import dedent # The DAG object we'll need this to instantiate a DAG from airflow import DAG # Operators we need this to operate! from import BashOperator from this article, I would like to give you a jump-start tutorial to understand the basic concepts and create a workflow pipeline from scratch.Īpache Airflow is an orchestration tool that helps you to programmatically create and handle task execution into a single workflow. See the License for the # specific language governing permissions and limitations # under the License. 
You may obtain a copy of the License at # Unless required by applicable law or agreed to in writing, # software distributed under the License is distributed on an # "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY # KIND, either express or implied. The ASF licenses this file # to you under the Apache License, Version 2.0 (the # "License") you may not use this file except in compliance # with the License. See the NOTICE file # distributed with this work for additional information # regarding copyright ownership. # Licensed to the Apache Software Foundation (ASF) under one # or more contributor license agreements.
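To make the idea of tasks and dependencies concrete before touching Airflow itself, here is a minimal sketch in plain Python. It is not Airflow's API: the `Task` class, the `run_workflow` helper, and the three task names are hypothetical stand-ins, and the ordering comes from the standard library's `graphlib` module (Python 3.9+). It only illustrates the core idea that a workflow is a set of tasks whose dependencies form a directed acyclic graph, and that each task runs after the tasks it depends on.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+


class Task:
    """Toy stand-in for an operator: a named unit of work (not an Airflow class)."""

    def __init__(self, task_id, work):
        self.task_id = task_id
        self.work = work  # callable that receives the results produced so far

    def execute(self, context):
        return self.work(context)


def run_workflow(tasks, upstream):
    """Run every task after the tasks it depends on.

    tasks:    dict mapping task_id -> Task
    upstream: dict mapping task_id -> set of task_ids that must finish first
    """
    # static_order() yields each node only after all of its predecessors
    # and raises graphlib.CycleError if the graph is not acyclic.
    order = list(TopologicalSorter(upstream).static_order())
    results = {}
    for task_id in order:
        results[task_id] = tasks[task_id].execute(results)
    return order, results


# Hypothetical workflow: fetch a record, extract two fields, format them.
tasks = {
    "fetch": Task(
        "fetch",
        lambda ctx: {"name": "airflow", "kind": "orchestrator", "extra": 1},
    ),
    "extract": Task(
        "extract",
        lambda ctx: {key: ctx["fetch"][key] for key in ("name", "kind")},
    ),
    "report": Task(
        "report",
        lambda ctx: "name={name} kind={kind}".format(**ctx["extract"]),
    ),
}
upstream = {"fetch": set(), "extract": {"fetch"}, "report": {"extract"}}

order, results = run_workflow(tasks, upstream)
print(order)              # ['fetch', 'extract', 'report']
print(results["report"])  # name=airflow kind=orchestrator
```

In a real DAG file, Airflow's operators would play the role of `Task`, and Airflow's scheduler would decide when each run happens based on the cron schedule you give it.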
